Łukasz Augustyniak

Data Scientist / Machine Learning Engineer / AI Consultant

Department of Computational Intelligence, Wroclaw University of Science and Technology

Biography

Łukasz is a Data Scientist / AI Consultant with 8+ years of experience in a wide range of ML projects (social media monitoring, call center transcript analysis, recommendation engines, information extraction from text, legal text analysis, and many more).

An award-winning Ph.D. student at the Wroclaw University of Science and Technology, he works on artificial intelligence methods for natural language analysis, especially attitude analysis and the generation of abstractive summaries of text collections in many languages, including English and Polish.

He has lectured at scientific and business conferences on artificial intelligence around the world, including in Poland, the United States, Italy, Great Britain, Belgium, China, Vietnam, and Singapore. He has actively participated in many national and international scientific projects, including ENGINE, RENOIR, PL-Grid, and TRANSFORM, cooperating with research units such as Stanford University (USA), Rensselaer Polytechnic Institute (USA), the University of Notre Dame (USA), Johns Hopkins University (USA), Nanyang Technological University (Singapore), Universidad Carlos III de Madrid (Spain), and the Jozef Stefan Institute (Slovenia).

He has co-authored many scientific publications on attitude analysis, information retrieval, and spoken language understanding, including papers in prestigious international journals such as Entropy and Neurocomputing. He is a laureate of the governmental TOP 500 Innovators program, through which he studied the commercialization of scientific research at the Universities of Cambridge and Oxford.

He received an MSc in Computer Science with distinction from the Wroclaw University of Technology in 2013 and, in parallel with his computer science studies, an MA in Law from the University of Wroclaw in 2014. He remains actively interested in the legal aspects of IT and in the analysis of legal documents using NLP.

He has implemented business projects such as social media monitoring (Brand24), analysis of call center transcripts (AVAYA, Spoken Communications), recommendation engines for marketing campaigns (8th lab, a startup he co-founded), text extraction (MeaningCloud), data analysis in the automotive sector (Edvantis), and many others.

For several years he has also worked as a consultant on machine learning and data science.

Interests

- Sentiment Analysis

- Information Extraction from Texts

- Legal Text Analysis

- Social Media Monitoring

- ASR Transcript Analysis

- Language Modeling

- Recommendation Engines

- IP Law

Education

PhD in Computer Science, Artificial Intelligence

Wroclaw University of Science and Technology

Science - Management - Commercialization, 2015

Cambridge University, UK

Master in Law, 2014

Wroclaw University

MSc in Computer Science (with distinction), 2013

Wroclaw University of Science and Technology

Skills

AI Solutions Due Diligence

AI Architecture Planning

Conversational AI

Sentiment Analysis

Information Extraction

Python

Legal NLP

IP Law

Recommendation Engines

Social Media Analytics

Spoken Language Understanding

ML Teams Hiring

Experience

Machine Learning Lead

AVAYA

Dialog Systems Lead in Clarin-PL-Biz Project

Wroclaw University of Science and Technology

Head of Data Science

Edvantis

Data science for Edvantis clients: understanding what they do and how they do it, and how Edvantis can keep improving their products with data science.

- defining the data science PoCs, roadmaps, pipelines, productization steps, data strategies,

- creating new data science capabilities for the business by envisioning and executing strategies that improve business performance through informed decision-making,

- building and leading a collaborative ML/DS team,

- developing strategies and methods for data collection, data quality, and data annotation,

- presenting results of Data Science processes and dealing directly with C-level stakeholders.

Visiting Researcher

Slovenska tiskovna agencija (STA)

Machine Learning Engineer (Contract)

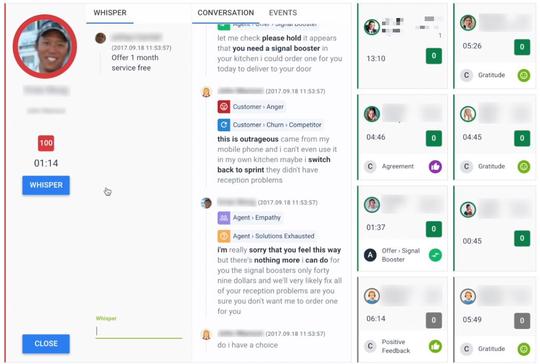

AVAYA

Spoken Communications has been acquired by AVAYA.

Design and implementation of AI-enabled solutions that reduce transaction times, improve agent productivity, and increase customer satisfaction. Implementation of state-of-the-art models for spoken language understanding.

Machine Learning Engineer (Contract)

Spoken Communications

Visiting Researcher

Nanyang Technological University

Co-founder & CEO

8th lab sp. z o.o.

Visiting Researcher

Jozef Stefan Institute

Visiting Researcher

Universidad Carlos III de Madrid

Natural Language Processing Engineer

MeaningCloud (Internship)

Research Assistant / Data Scientist

Wrocław University of Science and Technology

Design and implementation of state-of-the-art models for machine learning problems, mainly but not only in the NLP/NLU area.

I work mostly in Python and, when needed, also in Spark.

Through Wrocław University of Science and Technology, I have consulted on several ML-related projects and technologies for startups, banks, venture capitalists, manufacturing companies, and many others.

Natural Language Processing Engineer

BRAND24

Projects

AI/Data Science Consultancy

Construction Project Health

IoT Signal Analysis for Agricultural Machinery

Creating a Machine Learning-Based Sales Lead Generation System

Vehicle Information Analysis

Avaya Conversational Intelligence

Work Package Coordinator in RENOIR project

Featured Publications

Comprehensive analysis of aspect term extraction methods using various text embeddings

Recently, a variety of model designs and methods have blossomed in the sentiment analysis domain. However, there is still a lack of wide and comprehensive studies of aspect-based sentiment analysis (ABSA). We aim to fill this gap with a comparison and ablation analysis of aspect term extraction using various text embedding methods. We focused particularly on architectures based on long short-term memory (LSTM) with an optional conditional random field (CRF) enhancement, using different pre-trained word embeddings. Moreover, we analyzed how extending the word vectorization step with character embeddings influences performance. The experimental results on SemEval datasets revealed not only that bi-directional long short-term memory (BiLSTM) outperforms regular LSTM, but also that word embedding coverage and source strongly affect aspect detection performance. An additional CRF layer consistently improves the results as well.
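A CRF layer on top of a (Bi)LSTM typically selects the best tag sequence with Viterbi decoding over per-token emission scores and tag-transition scores. The abstract does not describe the authors' implementation, so the following is only a generic sketch, assuming a hypothetical BIO tag set (`O`, `B-ASP`, `I-ASP`) and hand-set scores; in a trained BiLSTM-CRF the emissions would come from the BiLSTM and the transitions would be learned.

```python
import numpy as np

TAGS = ["O", "B-ASP", "I-ASP"]  # hypothetical BIO tag set for aspect terms


def viterbi_decode(emissions, transitions):
    """Best tag sequence under a linear-chain CRF.

    emissions: (seq_len, num_tags) per-token scores, e.g. produced by a BiLSTM
    transitions: (num_tags, num_tags), transitions[i, j] = score of moving from tag i to tag j
    """
    seq_len, num_tags = emissions.shape
    score = emissions[0].copy()  # best score of any path ending in each tag at position 0
    backpointers = np.zeros((seq_len, num_tags), dtype=int)
    for t in range(1, seq_len):
        # candidate[i, j]: best path ending in tag i at t-1, then moving to tag j at t
        candidate = score[:, None] + transitions + emissions[t][None, :]
        backpointers[t] = candidate.argmax(axis=0)
        score = candidate.max(axis=0)
    # Follow the backpointers from the best final tag to recover the full path.
    best = [int(score.argmax())]
    for t in range(seq_len - 1, 0, -1):
        best.append(int(backpointers[t][best[-1]]))
    best.reverse()
    return [TAGS[i] for i in best]


emissions = np.array([[5.0, 0.0, 0.0], [0.0, 1.0, 2.0], [3.0, 0.0, 0.0]])
transitions = np.zeros((3, 3))
transitions[0, 2] = -100.0  # strongly discourage the invalid transition O -> I-ASP
print(viterbi_decode(emissions, transitions))  # ['O', 'B-ASP', 'O']
```

Without the transition penalty, the same emissions decode to the locally best but invalid `O, I-ASP, O`; the transition matrix is what lets a CRF enforce this kind of label structure on top of independent per-token scores.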

Political Advertising Dataset: the use case of the Polish 2020 Presidential Elections

Political campaigns are full of political ads posted by candidates on social media. Political advertisements constitute a basic form of campaigning, subject to various social requirements. We present the first publicly open dataset for detecting specific text chunks and categories of political advertising in the Polish language. It contains 1,705 human-annotated tweets tagged with nine categories, which constitute campaigning under Polish electoral law. We achieved an inter-annotator agreement of 0.65 (Cohen's kappa). An additional annotator resolved the mismatches between the first two annotators, improving the consistency and complexity of the annotation process. We used the newly created dataset to train a well-established neural tagger, achieving an F1 score of 70%. We also outline possible use cases for such datasets and models, with an initial analysis of the Polish 2020 Presidential Elections on Twitter.
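Cohen's kappa corrects raw agreement for agreement expected by chance: kappa = (p_o - p_e) / (1 - p_e), where p_o is the observed agreement and p_e is estimated from each annotator's label distribution. A minimal sketch, assuming both annotators labelled the same sequence of items; the labels in the example are hypothetical, not taken from the dataset. scikit-learn's `sklearn.metrics.cohen_kappa_score` computes the same quantity.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators who labelled the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two non-empty label lists of equal length")
    n = len(labels_a)
    # Observed agreement: share of items on which both annotators chose the same label.
    p_o = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Chance agreement: product of each annotator's marginal label frequencies.
    freq_a = Counter(labels_a)
    freq_b = Counter(labels_b)
    p_e = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)
    if p_e == 1.0:
        return 1.0  # both annotators used one identical label for every item
    return (p_o - p_e) / (1 - p_e)


print(cohens_kappa(["A", "A", "B", "B"], ["A", "B", "B", "B"]))  # 0.5
```

Here the annotators agree on 3 of 4 items (p_o = 0.75), but half of that agreement is expected by chance (p_e = 0.5), so kappa is 0.5 rather than 0.75.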

Punctuation Prediction in Spontaneous Conversations: Can We Mitigate ASR Errors with Retrofitted Word Embeddings?

Automatic Speech Recognition (ASR) systems introduce word errors, which often confuse punctuation prediction models, turning punctuation restoration into a challenging task. These errors usually take the form of homonyms. We show how retrofitting the word embeddings on domain-specific data can mitigate ASR errors. Our main contribution is a method for better aligning homonym embeddings, validated on the punctuation prediction task. We record absolute improvements in punctuation prediction accuracy ranging from 6.2% (for question marks) to 9% (for periods) compared with the state-of-the-art model.
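The abstract does not give the exact retrofitting procedure. For orientation, the following sketches the generic retrofitting update of Faruqui et al. (2015), simplified to constant weights: each vector is repeatedly pulled toward its neighbours in a lexicon while staying anchored to its original value. The `lexicon` of ASR-confusable word pairs and the `alpha`/`beta` weights are hypothetical placeholders, not the authors' data or settings.

```python
import numpy as np


def retrofit(embeddings, lexicon, iterations=10, alpha=1.0, beta=1.0):
    """Generic retrofitting (simplified Faruqui et al. 2015 update, constant weights).

    embeddings: dict word -> 1-D numpy array; the originals are kept fixed as anchors
    lexicon: dict word -> list of related words (here: hypothetical ASR confusion pairs)
    """
    new = {w: v.copy() for w, v in embeddings.items()}
    for _ in range(iterations):
        for word, neighbours in lexicon.items():
            if word not in new:
                continue
            neighbours = [n for n in neighbours if n in new]
            if not neighbours:
                continue
            # Weighted average of the original vector and the current neighbour vectors.
            total = alpha * embeddings[word] + beta * sum(new[n] for n in neighbours)
            new[word] = total / (alpha + beta * len(neighbours))
    return new


vectors = {
    "there": np.array([1.0, 0.0]),
    "their": np.array([0.0, 1.0]),
    "cat": np.array([5.0, 5.0]),
}
confusions = {"there": ["their"], "their": ["there"]}  # hypothetical homophone pair
fitted = retrofit(vectors, confusions)
before = np.linalg.norm(vectors["there"] - vectors["their"])
after = np.linalg.norm(fitted["there"] - fitted["their"])
print(f"{before:.3f} -> {after:.3f}")
```

Words that an ASR system tends to confuse end up closer in the embedding space, so a downstream model is less disturbed when one is transcribed as the other, while words outside the lexicon (`cat`) keep their original vectors.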

WER we are and WER we think we are

Natural language processing of conversational speech requires the availability of high-quality transcripts. In this paper, we express our skepticism towards the recent reports of very low Word Error Rates (WERs) achieved by modern Automatic Speech Recognition (ASR) systems on benchmark datasets. We outline several problems with popular benchmarks and compare three state-of-the-art commercial ASR systems on an internal dataset of real-life spontaneous human conversations and on the HUB'05 public benchmark. We show that WERs are significantly higher than the best reported results. We formulate a set of guidelines that may aid in the creation of real-life, multi-domain datasets with high-quality annotations for training and testing robust ASR systems.
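Word Error Rate is the word-level edit distance between a reference transcript and an ASR hypothesis, normalized by reference length: WER = (S + D + I) / N, where S, D and I count substitutions, deletions and insertions and N is the number of reference words. A minimal sketch; it only splits on whitespace, whereas practical evaluations also normalize text (casing, punctuation, numerals), and such choices can change the resulting score.

```python
def word_error_rate(reference, hypothesis):
    """WER = (substitutions + deletions + insertions) / number of reference words."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference transcript must contain at least one word")
    # dist[i][j] = edit distance between the first i reference words and the first j hypothesis words
    dist = [[0] * (len(hyp) + 1) for _ in range(len(ref) + 1)]
    for i in range(len(ref) + 1):
        dist[i][0] = i  # i deletions
    for j in range(len(hyp) + 1):
        dist[0][j] = j  # j insertions
    for i in range(1, len(ref) + 1):
        for j in range(1, len(hyp) + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            dist[i][j] = min(
                dist[i - 1][j] + 1,         # deletion
                dist[i][j - 1] + 1,         # insertion
                dist[i - 1][j - 1] + cost,  # substitution or match
            )
    return dist[len(ref)][len(hyp)] / len(ref)


print(round(word_error_rate("the cat sat on the mat", "the cat sat on mat"), 3))  # 0.167
```

Because insertions are counted against the reference length, WER is not bounded by 1.0: a one-word reference transcribed as three words already scores 2.0.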

WordNet2Vec: Corpora agnostic word vectorization method

The complex nature of big data resources requires new structuring methods, especially for textual content. WordNet is a good knowledge source for a comprehensive abstraction of natural language, as it offers good implementations for many languages. Since WordNet embeds natural language in the form of a complex network, this paper proposes a transformation mechanism, WordNet2Vec, which creates a vector for each word in WordNet. These vectors encapsulate a general position: the role of a given word in relation to all other words in the given natural language. Any list or set of such vectors contains knowledge about the context of its components within the whole language. This type of word representation can easily be applied to many analytic tasks, such as classification or clustering. The usefulness of the WordNet2Vec method is demonstrated in sentiment analysis, including the classification of an Amazon opinion text dataset with transfer learning.
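The abstract describes each vector as encoding a word's position relative to all other words in the WordNet network. One way to realize that idea, assumed here rather than taken from the paper, is to use graph distances: each word's vector holds its shortest-path (hop) distance to every word in a fixed vocabulary order. The toy graph is hypothetical; a real run would build it from WordNet relations, and the |V| x |V| output grows quickly with vocabulary size.

```python
from collections import deque


def bfs_distances(graph, source):
    """Hop distance from source to every reachable node of an unweighted, undirected graph."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for neighbour in graph.get(node, ()):
            if neighbour not in dist:
                dist[neighbour] = dist[node] + 1
                queue.append(neighbour)
    return dist


def graph_word_vectors(graph, unreachable=-1):
    """One vector per word: its distances to every word, in sorted vocabulary order.

    graph: dict word -> list of neighbours; every word must appear as a key and
    edges are assumed to be listed in both directions.
    """
    vocab = sorted(graph)
    vectors = {}
    for word in vocab:
        dist = bfs_distances(graph, word)
        vectors[word] = [dist.get(other, unreachable) for other in vocab]
    return vocab, vectors


toy_graph = {"good": ["nice", "great"], "nice": ["good"], "great": ["good"], "bad": []}
vocab, vectors = graph_word_vectors(toy_graph)
print(vocab)            # ['bad', 'good', 'great', 'nice']
print(vectors["nice"])  # [-1, 1, 2, 0]
```

The `unreachable` marker is an arbitrary choice for disconnected words; a large constant would be an equally plausible design if the vectors feed a distance-based classifier.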

Comprehensive study on lexicon-based ensemble classification sentiment analysis

We propose a novel method for computing sentiment orientation that outperforms supervised learning approaches in time and memory complexity and is not statistically significantly different from them in accuracy. Our method consists of a novel approach to generating unigram, bigram and trigram lexicons. The proposed method, called frequentiment, is based on calculating the frequency of features (words) in the document and averaging their impact on the sentiment score as opposed to documents that do not contain these features. Afterwards, we use ensemble classification to improve the overall accuracy of the method. Importantly, the frequentiment-based lexicons with sentiment threshold selection outperform other popular lexicons and some supervised learners, while being 3-5 times faster than the supervised approach. We compare 37 methods (lexicons, ensembles with the lexicons' predictions as input, and supervised learners) applied to 10 Amazon review datasets and provide the first statistical comparison of sentiment annotation methods that include ensemble approaches. It is one of the most comprehensive comparisons of domain sentiment analysis in the literature.
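The lexicon construction is described only in outline: a word's score reflects how documents containing it are rated compared with documents that do not contain it. A minimal sketch of that contrast, assuming a simplified reading (word presence rather than frequency, unigrams only, a plain difference of mean ratings, and a fixed threshold in place of threshold selection); it is not the exact frequentiment formula, and the reviews and ratings are hypothetical.

```python
from statistics import mean


def build_unigram_lexicon(documents, scores, min_count=1):
    """Hypothetical simplification: word score = mean rating of documents containing
    the word minus mean rating of documents that do not contain it."""
    token_sets = [set(doc.lower().split()) for doc in documents]
    vocab = set().union(*token_sets)
    lexicon = {}
    for word in vocab:
        with_word = [s for toks, s in zip(token_sets, scores) if word in toks]
        without_word = [s for toks, s in zip(token_sets, scores) if word not in toks]
        # Skip rare words and words present in every document (no contrast available).
        if len(with_word) < min_count or not without_word:
            continue
        lexicon[word] = mean(with_word) - mean(without_word)
    return lexicon


def document_score(text, lexicon):
    """Average lexicon score of the known words in a document (0.0 if none are known)."""
    hits = [lexicon[w] for w in text.lower().split() if w in lexicon]
    return mean(hits) if hits else 0.0


def classify(text, lexicon, threshold=0.0):
    return "positive" if document_score(text, lexicon) > threshold else "negative"


reviews = ["great product", "great value", "terrible product", "terrible service"]
ratings = [5, 5, 1, 1]
lexicon = build_unigram_lexicon(reviews, ratings)
print(classify("great service", lexicon))     # positive
print(classify("terrible product", lexicon))  # negative
```

A word that appears equally in good and bad reviews (here `product`) gets a score near zero, so it barely moves the document score; this contrast with documents lacking the word is the intuition the abstract describes.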